
The Trinity Moment: When a Local AI Model, altFINS CLI, and an M1 Max Started Working Like One Tool

Local AI • altFINS CLI • M1 Max

A field report on what happens when Codex, a local qwen3.6:35b-a3b-coding-nvfp4 model, and the altFINS af CLI stop being separate tools and start behaving like one workflow. The whole pipeline ran on a single M1 Max laptop, found a structured shortlist of 13 crypto assets, and cost less than a cent in electricity.

| Memory Bandwidth | Inference Speed | Qualified Assets | Electricity Cost |
|---|---|---|---|
| 400 GB/s | ~60 t/s | 13 | < €0.01 |

A Better Kind of AI Feeling

There is a specific emotional jolt when a local model stops sounding clever and starts acting competent. That was the moment here. Not because the model delivered one dramatic answer, but because it could actually work: inspect the CLI, choose commands, run filters, survive a failed path, and keep the chain together.

The experiment was simple to describe and surprisingly non-trivial to execute: identify major crypto assets that were still below overbought territory, already above their 50-day moving average, and backed by recent bullish momentum. Then pull fresh news so the shortlist had a sentiment layer and not only technicals.

What the Experiment Actually Found

The workflow started with the top 100 assets by market cap. From there, the model and CLI stack narrowed the field in steps:

- 86 assets had RSI14 below 70.

- 13 of those 86 were trading above SMA50.

- All 13 had recent bullish signals since May 3, 2026.

- Recent news since May 3, 2026 was pulled as a sentiment confirmation layer.

| # | Symbol | RSI14 | Price | SMA50 | % Above SMA50 |
|---|---|---|---|---|---|
| 1 | BTC | 62.0 | $79,963 | $73,247 | +9.2% |
| 2 | ETH | 49.8 | $2,283 | $2,222 | +2.7% |
| 3 | BNB | 55.9 | $641 | $621 | +3.2% |
| 4 | SOL | 58.7 | $89 | $85 | +4.7% |
| 5 | XRP | 47.7 | $1.39 | $1.38 | +0.4% |

The remaining qualifying names were DOGE, ETC, XMR, PEPE, LINK, ADA, AVAX, UNI, LTC, and DOT. That is a much more useful output than “the market is bullish.” It is a shortlist with structure.
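The two technical filters described above can be sketched in a few lines. This is a minimal illustration, not the agent's actual code: the `Snapshot` type, the function names, and the `HYPOTHETICAL` row are invented for the example, and the bullish-signal and news steps are left out because they depend on CLI output whose shape is not shown in this report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One asset's latest readings (hypothetical container for this sketch)."""
    symbol: str
    rsi14: float
    price: float
    sma50: float


def pct_above_sma50(s: Snapshot) -> float:
    """Percentage distance of price above (positive) or below (negative) SMA50."""
    return (s.price / s.sma50 - 1.0) * 100.0


def shortlist(snapshots, rsi_ceiling=70.0):
    """Keep assets with RSI14 below the ceiling and price above SMA50."""
    return [s for s in snapshots if s.rsi14 < rsi_ceiling and s.price > s.sma50]


# First two rows are copied from the table above; the third is made up
# purely to show an asset being rejected for an overbought RSI14.
rows = [
    Snapshot("BTC", 62.0, 79963.0, 73247.0),
    Snapshot("ETH", 49.8, 2283.0, 2222.0),
    Snapshot("HYPOTHETICAL", 75.0, 10.0, 9.0),
]

for s in shortlist(rows):
    print(f"{s.symbol}: RSI14 {s.rsi14}, {pct_above_sma50(s):+.1f}% vs SMA50")
```

Keeping the two conditions in one predicate makes it easy to change the RSI ceiling or swap in a different moving average without touching the rest of the chain.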

Why qwen3.6:35b-a3b-coding-nvfp4 Was the Better Fit Than Gemma 4

This is not a sweeping benchmark claim. It is a workflow claim. On short, self-contained prompts, lots of models feel fast. On a multi-step analytical chain with intermediate symbol lists, command syntax, fallback logic, and several rounds of tool output, context discipline matters more.

For this job, qwen3.6:35b-a3b-coding-nvfp4 held together better than Gemma 4 because the session was not one question. It was a sequence of sub-results that kept rewriting the next step. That is where long-context handling pays for itself.

One captured terminal moment makes that concrete: the agent first tried to grab SMA50 across all 86 RSI-qualified assets, hit a messy output path, then switched into a narrower top-30 route and symbol-by-symbol checks. That is the kind of controlled adjustment you want in a real coding workflow.
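That adjustment follows a familiar pattern: try the wide call first, and if it fails, narrow the list and go one symbol at a time. The sketch below shows the pattern only. `fetch_batch` and `fetch_one` are hypothetical callables standing in for whatever the agent actually ran, and taking the first 30 entries assumes the symbol list is already ordered by market cap.

```python
def fetch_with_fallback(symbols, fetch_batch, fetch_one, narrow_to=30):
    """Try one batch call; on failure, narrow the list and go symbol by symbol."""
    try:
        return fetch_batch(symbols)
    except Exception as batch_error:
        print(f"batch path failed ({batch_error}); falling back")

    results = {}
    for symbol in symbols[:narrow_to]:
        try:
            results[symbol] = fetch_one(symbol)
        except Exception as error:
            # Skip a single bad symbol instead of aborting the whole run.
            print(f"skipping {symbol}: {error}")
    return results
```

The per-symbol loop swallows individual failures on purpose, so one malformed response does not throw away the results already collected.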

Why the M1 Max Made This Feel Legit

The M1 Max is not magic. It is bandwidth. Apple Silicon’s unified memory design gives this class of local model room to breathe, and the headline number that matters is up to 400 GB/s of memory bandwidth.

On this setup, the observed speed was roughly 60 tokens per second. That is fast enough that the loop feels interactive. You can request a filter, inspect the result, and push into the next step without the whole exercise collapsing into latency theater.
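A rough way to relate the two headline numbers, assuming token generation is limited by memory bandwidth rather than compute (a common rule of thumb, not something measured in this session): dividing peak bandwidth by the observed token rate gives the memory traffic available per generated token. The function name is made up for the example.

```python
def gb_per_token_budget(bandwidth_gb_s: float, tokens_per_s: float) -> float:
    """GB of memory traffic available per generated token at a given speed."""
    return bandwidth_gb_s / tokens_per_s


# Figures from this article: up to 400 GB/s, roughly 60 tokens per second.
print(f"{gb_per_token_budget(400.0, 60.0):.1f} GB per token")
```

Under that assumption the budget is an upper bound, since the 400 GB/s figure is a peak and real workloads rarely sustain it.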

Heat, Time, and the Price of Intelligence

The laptop ran hot. That is part of the honest version of the story. But the economics were still ridiculous in the best way.

The session felt like a 15-minute burst of digital brain power, although the measured core run was shorter than that. Either way, the electricity cost still lands below a cent, which is the part that makes cloud comparisons feel almost silly.
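The sub-cent figure is easy to sanity-check with back-of-the-envelope arithmetic. The power draw and electricity price below are assumptions chosen for illustration, not measurements from this run; only the 15-minute duration comes from the article.

```python
def electricity_cost_eur(avg_watts: float, minutes: float, eur_per_kwh: float) -> float:
    """Energy cost of a run: watts -> kWh over the duration, times the tariff."""
    kwh = avg_watts / 1000.0 * (minutes / 60.0)
    return kwh * eur_per_kwh


# ASSUMPTIONS (not from the article): 60 W average draw, EUR 0.30 per kWh.
cost = electricity_cost_eur(avg_watts=60.0, minutes=15.0, eur_per_kwh=0.30)
print(f"{cost:.4f} EUR")
```

With these inputs the run uses 0.015 kWh, so even doubling the assumed power draw keeps the cost under one cent.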

How Ollama and the CLI Actually Connect

The clean mental model is this: the model does not browse the market on its own. The model reasons locally. The agent layer exposes tools. The af CLI is what actually reaches live altFINS endpoints.

    export OLLAMA_HOST=0.0.0.0
    export OLLAMA_ORIGINS="*"
    ollama serve

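Those launch variables matter because they make the local Ollama server reachable by the tooling layer coordinating the session: OLLAMA_HOST=0.0.0.0 binds the server to all network interfaces, and OLLAMA_ORIGINS="*" accepts requests from any origin. That is how “I can use altFINS CLI” turns from a slogan into an operational fact.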
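A quick way to confirm the server is actually reachable is to ask it which models it has. The sketch below uses Ollama's `/api/tags` endpoint and its default port 11434; the helper names are invented for this example, and the URL handling is deliberately simple (it does not cover IPv6 addresses).

```python
import json
import urllib.request

DEFAULT_PORT = 11434  # Ollama's default listening port


def client_base_url(ollama_host: str = "0.0.0.0") -> str:
    """Turn an OLLAMA_HOST bind value into a URL a local client can call."""
    host = ollama_host.split("://")[-1]
    name, _, port = host.partition(":")
    if name in ("", "0.0.0.0"):
        name = "127.0.0.1"  # 0.0.0.0 is a bind address, not a destination
    return f"http://{name}:{port or DEFAULT_PORT}"


def list_local_models(base_url: str, timeout: float = 3.0) -> list:
    """Return model names reported by Ollama's /api/tags endpoint."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        payload = json.load(resp)
    return [m["name"] for m in payload.get("models", [])]
```

With the server running, `list_local_models(client_base_url())` should include the model tag used in the session; a connection error means the tooling layer will not be able to reach it either.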

The Command Chain

For anyone who wants to reproduce the workflow, this is the sequence the agent ran end to end:

    af markets search --size 100 -o json
    af analytics history --symbol <SYM> --type RSI14 --size 1 -o json
    af analytics history --symbol <SYM> --type SMA50 --size 1 -o json
    af signals list --symbols <SYM> --direction BULLISH --from 2026-05-03 --size 5 -o json
    af news list --from 2026-05-03 --size 500 -o json
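For scripting the same sequence outside an agent, the commands can be built as argument lists and run with `subprocess`. This is a sketch under assumptions: it uses only the subcommands and flags shown above, the function names and step keys are made up, and the structure of the JSON that `af` returns is not documented here, so `run_json` simply hands back whatever was parsed.

```python
import json
import subprocess


def build_commands(symbol: str, since: str) -> dict:
    """Return the five af invocations as argv lists, keyed by pipeline step."""
    as_json = ["-o", "json"]
    return {
        "universe": ["af", "markets", "search", "--size", "100", *as_json],
        "rsi14": ["af", "analytics", "history", "--symbol", symbol,
                  "--type", "RSI14", "--size", "1", *as_json],
        "sma50": ["af", "analytics", "history", "--symbol", symbol,
                  "--type", "SMA50", "--size", "1", *as_json],
        "signals": ["af", "signals", "list", "--symbols", symbol,
                    "--direction", "BULLISH", "--from", since,
                    "--size", "5", *as_json],
        "news": ["af", "news", "list", "--from", since, "--size", "500", *as_json],
    }


def run_json(argv: list):
    """Run one command and parse stdout as JSON; raises if the CLI exits non-zero."""
    done = subprocess.run(argv, capture_output=True, text=True, check=True)
    return json.loads(done.stdout)
```

For example, `run_json(build_commands("BTC", "2026-05-03")["rsi14"])` would fetch the latest RSI14 reading for BTC, provided `af` is installed and authenticated. Passing argv lists rather than shell strings avoids quoting problems when symbols come from earlier output.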

More Than a Finance Trick

I also tested this setup on simple HTML, JavaScript, and CSS work, including lightweight games and simulations. That was a useful confidence check. But the real payoff was this financial workflow, because it mixed scripting, ranking, external data access, retries, and explanation in one place.

That is the point where local AI stops feeling like a novelty and starts feeling like infrastructure for individual analysts and traders.

Session Snapshot

| Item | Value |
|---|---|
| Model | qwen3.6:35b-a3b-coding-nvfp4 |
| Host Runtime | Ollama on Apple Silicon M1 Max |
| Observed Style | Tool-driven, long-context coding workflow |
| Data Source | altFINS CLI (af) |
| Qualified Shortlist | BTC, ETH, BNB, SOL, XRP, DOGE, ETC, XMR, PEPE, LINK, ADA, AVAX, UNI, LTC, DOT |

Final Thought

The real story is not that AI “picked coins.” The real story is that a local model on a prosumer laptop successfully ran an analyst workflow: inspect, filter, retry, verify, and explain. Once that stack can really use af, the feeling changes immediately.

It is not abstract anymore. It is a warm M1 Max on a desk, a real CLI, a real shortlist, and a bill that barely exists.


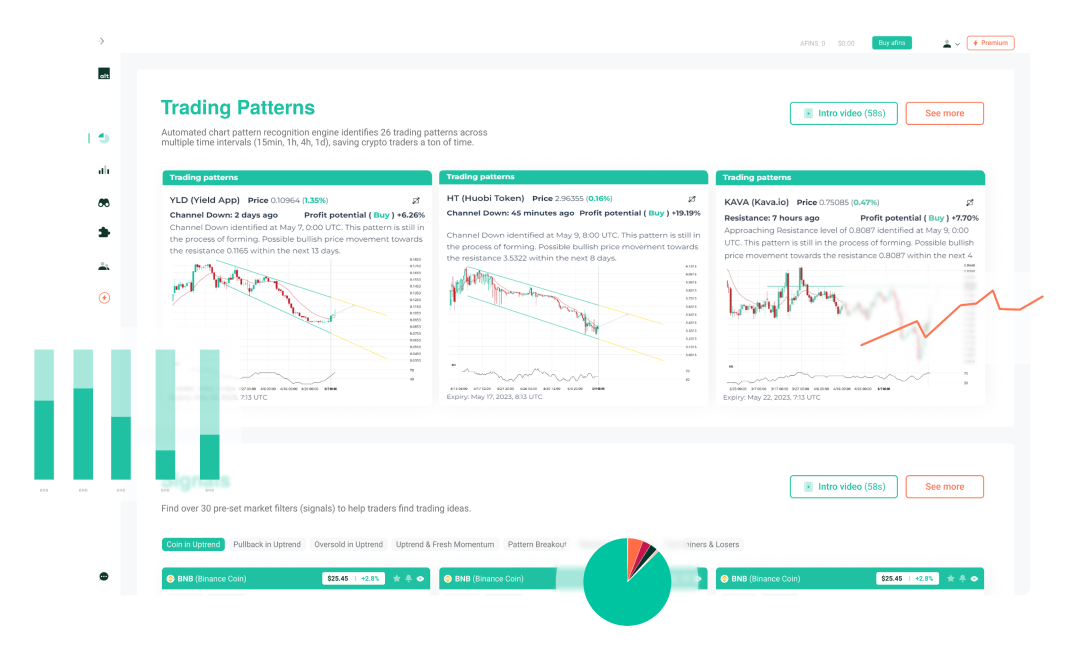
